I think you (Edmund) are misreading things. Actually, I think everyone is misreading things. Maybe I will, too, but as evidence I submit that despite a top-level agreement that communities should be welcoming, there is extensive disagreement about the particulars of everything. If most of us aren’t misreading things, why would one get so much disagreement despite apparently having pretty much the same goals?

My suspicion is that the problem boils down to inherent tradeoffs that are subtle enough that it’s possible to be unaware of them; and to the extent that one is aware, the consequences are unpalatable. They are also unavoidable, at least without squarely examining the issue and finding some creative way around it. (Fair warning: I do not have solutions for these.)

I’m going to try to make these tradeoffs blatant.

The first tradeoff arises from the paradox of tolerance, which I will state as follows.

The Paradox of Tolerance: If you want to ensure a tolerant environment you cannot tolerate intolerance.

This paradox is really depressing. It suggests a proof that comprehensive tolerance is impossible. (I’ll assume everyone can see it.)

In order to avoid accepting the unpalatable conclusion that one supports being intolerant of some pretty okay people, it’s very tempting to (at least subconsciously) either demonize mild cases of intolerance as abhorrent (so you’re not being intolerant of “pretty okay people” but of “awful, toxic scum”); or to decide that the real virtue worth having is fortitude: if you can’t take the heat you should get out of the fire (without worrying overmuch about why things are on fire to begin with).

This brings us to the second point.

Moderators and users are just people: Policies are implemented by people, with all the limitations of perspective and emotion that go along with the human condition; and users are also people, again with the same manner of limitations.

Reliably generating desirable behavior out of noisy components is hard. We’re noisy components. We have limited perspective and often have difficult jobs to try to do with incomplete, unreliable information; and if we do poorly, our social standing may suffer (i.e. other people will blame us for it).

In particular, negative interactions are likely to occur and dominate the discussion (cf. this thread!); but correcting them by moderation is fraught with peril because moderators also have limited perspectives and have an asymmetric power relationship with users (so that mistakes are less easily corrected by a user “pushing back”; they can’t push as hard).

It’s also quite depressing.

So, the task we face is really tough.

I think it’s worth acknowledging that and reflecting upon it for a while.

Even in real-life communities, creating a healthy environment is challenging, and that is with a whole bunch of instincts that aren’t nearly so strongly at play online.

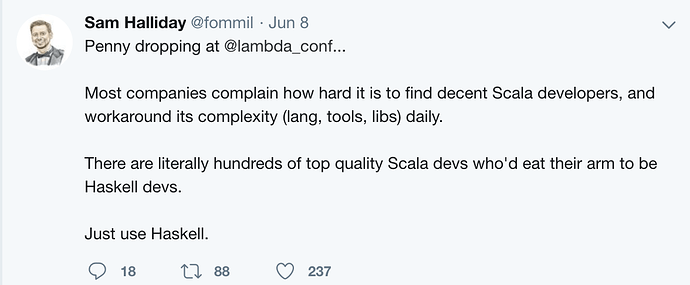

And we can’t just give up, either. Scala has a community whether or not we decide there “should” be one.

We’re playing a game that’s impossible to win and which we can’t stop playing.

Unlike e.g. Pieter Hintjens, I don’t have clear ideas about how to solve these problems, just a hunch that since every extreme is shown to be bad, the best answers involve some sort of reasonable compromise. The extremes:

- complete tolerance of arbitrarily bad intolerance => environment is awash with toxic negativity
- complete intolerance of anything that smells like “intolerance” => pure groupthink with no room for expression of criticism to improve things
- no moderation whatsoever => anarchy, disorder, chaos, noise
- unrestrained moderation => dictatorship, well-meaning or not, with widespread fear regarding who will be the next target

I also have a hunch that admitting the difficulty of the problem will help us evaluate potential solutions, as we’ll be more likely to have to express and deal with the downside associated with every proposal meant to achieve something positive.

There are a lot of strategies that have been tried to create some sort of intermediate solutions. I am not going to list any of them, but they’re worth noticing and thinking about in the context of the tradeoffs that they make.

I do have a few thoughts on the particulars.

- I agree with @gapjolly that we do need to go down the “how many milli-infractions equate to a bannable offense” road. If we don’t, we ask moderators to do an even more impossible job than usual (integrate milli-infractions and assess if some unspecified threshold has been crossed), and we open up both the potential for abuse (moderator has a feeling about how bad it is that is shaped by personal biases or whatever but which doesn’t reflect reality and/or an “average” perspective on how bad it is) and the perception of abuse where none has happened (observers have a feeling that it’s not bad yet moderation happened, with no clear data to correct them). I don’t know whether milli-infractions should count, or whether behavior off of a venue covered by the CoC should still count against an individual when in the venue, but I am pretty sure that the actual rules should be stated clearly and that moderation should, to the extent possible, be enacted on the basis of verifiable facts, not vague feelings (even feelings shared by multiple moderators). We’re letting everyone down, moderators and users alike, when disciplinary action has to be enacted on the basis of a hunch because there isn’t anything more to go on.

- I agree that whatever the rules are, there are going to be corner-cases and moderators probably shouldn’t have to get official clarifications before acting, because some people cause major disruption by sitting right on the most problematic corners for as long as they can. But I think the discretion should be as narrow as possible given the potential downsides (i.e. moderator error + stifling intolerance of non-groupthink).

- I also agree that a free-for-all is a tremendously unreliable way to build a positive community. The default online is free-for-all; empirically, it rarely works. (In person there is a very strong “we will isolate you socially” and/or “I will punch you” counter-move to disruptive, antisocial behavior; free-for-alls work better there.)

- “If you don’t like it, try to make your own with different rules” is ultimately true but probably not productive to say, since at the point where a community forks like that, something’s gone really wrong. Also, it already happened with cats/scalaz, and in fact both sets of rules at least kinda work for the people in those communities. (One set may be better than the other, but neither is obviously an absolute disaster.) I think fostering a single community is a worthwhile goal, if at all possible.

- I think @fommil’s comment about rehabilitation (“Recovery Centers”) is incredibly important. If you look at objective measures of the effectiveness of the justice systems in, say, the U.S. vs. Scandinavia, the punishment-heavy one tends to score poorly (e.g. higher recidivism). Also, “If you do things that we think make the community less useful, we’ll try to help you improve so you can stay and we’re all better off!” is a way more welcoming message to everyone than, “If you’re bad, we’ll ban you.”

- We can write all the CoCs and rules about moderation that we want, but at the end of the day, someone has to actually implement them. If we expect particular people to do the job, we should listen very carefully to what they say about what does and doesn’t work for them. The CoC might be somewhat aspirational, but it shouldn’t be divorced widely from practice or you lose the transparency that both helps prevent mistakes or abuse, and helps prevent the impression of mistakes or abuse where there was none.

With all that said, I like @sjrd’s draft, but I don’t think it goes far enough. And I think a lot of useful things have been said in this discussion, but a lot of it seems to be taking the strategy of trial lawyers arguing their case without grappling head-on with the difficulties.
